
Projects

🪐 Project templates

Our projects repo includes various project templates for different NLP tasks, models, workflows and integrations that you can clone and run. The easiest way to get started is to pick a template, clone it and start modifying it!

spaCy projects let you manage and share end-to-end spaCy workflows for

different use cases and domains, and orchestrate training, packaging and

serving your custom models. You can start off by cloning a pre-defined project

template, adjust it to fit your needs, load in your data, train a model, export

it as a Python package and share the project templates with your team. spaCy

projects can be used via the new spacy project command.

For an overview of the available project templates, check out the

projects repo. spaCy projects also

integrate with many other cool machine learning and data

science tools to track and manage your data and experiments, iterate on demos

and prototypes and ship your models into production.

Introduction and workflow


spaCy projects make it easy to integrate with many other awesome tools in the data science and machine learning ecosystem to track and manage your data and experiments, iterate on demos and prototypes and ship your models into production.

- Manage and version your data
- Create labelled training data
- Visualize and demo your models
- Serve your models and host APIs
- Distributed and parallel training
- Track your experiments and results

1. Clone a project template

Cloning under the hood

To clone a project, spaCy calls into git and uses the "sparse checkout" feature to only clone the relevant directory or directories.

The spacy project clone command clones an existing

project template and copies the files to a local directory. You can then run the

project, e.g. to train a model and edit the commands and scripts to build fully

custom workflows.

$ python -m spacy project clone some_example_project

By default, the project will be cloned into the current working directory. You

can specify an optional second argument to define the output directory. The

--repo option lets you define a custom repo to clone from, if you don't want

to use the spaCy projects repo. You

can also use any private repo you have access to with Git.

2. Fetch the project assets

project.yml

assets:
  - dest: 'assets/training.spacy'
    url: 'https://example.com/data.spacy'
    checksum: '63373dd656daa1fd3043ce166a59474c'

Assets are data files your project needs – for example, the training and

evaluation data or pretrained vectors and embeddings to initialize your model

with. Each project template comes with a project.yml that defines the assets

to download and where to put them. The spacy project assets command will fetch the project assets for you:

cd some_example_project

python -m spacy project assets
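The checksum check on a downloaded asset can be pictured roughly as follows. This is a minimal sketch, not spaCy's actual implementation: it assumes the checksum is an MD5 hex digest (the 32-character value in the example above is consistent with that), and the helper names `file_md5` and `verify_asset` are hypothetical.

```python
import hashlib
from pathlib import Path


def file_md5(path, chunk_size=1 << 20):
    """Return the MD5 hex digest of a file, read in chunks to bound memory use."""
    digest = hashlib.md5()
    with Path(path).open("rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_asset(dest, checksum=None):
    """Raise if the asset is missing or its checksum doesn't match (assumed MD5)."""
    path = Path(dest)
    if not path.is_file():
        raise FileNotFoundError(f"Missing asset: {dest}")
    if checksum is not None and file_md5(path) != checksum:
        raise ValueError(f"Checksum mismatch for asset: {dest}")
    return True
```

Calling `verify_asset("assets/training.spacy", "63373dd656daa1fd3043ce166a59474c")` would then either return `True` or raise an error that tells you which file to look at.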

3. Run a command

project.yml

commands:
  - name: preprocess
    help: "Convert the input data to spaCy's format"
    script:
      - 'python -m spacy convert assets/train.conllu corpus/'
      - 'python -m spacy convert assets/eval.conllu corpus/'
    deps:
      - 'assets/train.conllu'
      - 'assets/eval.conllu'
    outputs:
      - 'corpus/train.spacy'
      - 'corpus/eval.spacy'

Commands consist of one or more steps and can be run with

spacy project run. The following will run the command

preprocess defined in the project.yml:

$ python -m spacy project run preprocess

Commands can define their expected dependencies and outputs

using the deps (files the commands require) and outputs (files the commands

create) keys. This allows your project to track changes and determine whether a

command needs to be re-run. For instance, if your input data changes, you want

to re-run the preprocess command. But if nothing changed, this step can be

skipped. You can also set --force to force re-running a command, or --dry to

perform a "dry run" and see what would happen (without actually running the

script).

4. Run a workflow

project.yml

workflows:
  all:
    - preprocess
    - train
    - package

Workflows are series of commands that are run in order and often depend on each

other. For instance, to generate a packaged model, you might start by converting

your data, then run spacy train to train your model on the

converted data and if that's successful, run spacy package

to turn the best model artifact into an installable Python package. The

following command runs the workflow named all defined in the project.yml, and executes the commands it specifies, in order:

$ python -m spacy project run all
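Conceptually, running a workflow means looking up each command by name and executing its script steps one after another, stopping as soon as a step fails. The following is a minimal sketch of that idea under stated assumptions, not spaCy's implementation: `run_workflow` is a hypothetical helper, and `commands` is assumed to be the list of command dictionaries you'd get from parsing the project.yml.

```python
import shlex
import subprocess


def run_workflow(workflow, commands):
    """Run the named commands in order and return the names that completed.

    `workflow` is a list of command names; `commands` is a list of dicts with
    a "name" and a "script" (list of command-line strings).
    """
    by_name = {command["name"]: command for command in commands}
    completed = []
    for name in workflow:
        for step in by_name[name]["script"]:
            # check=True raises CalledProcessError on a non-zero exit code,
            # so later commands never run after a failed step.
            subprocess.run(shlex.split(step), check=True)
        completed.append(name)
    return completed
```

With the workflow above, `run_workflow(["preprocess", "train", "package"], commands)` would only reach `package` if both earlier commands exited successfully.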

Using the expected dependencies and outputs defined in the

commands, spaCy can determine whether to re-run a command (if its inputs or

outputs have changed) or whether to skip it. If you're looking to implement more

advanced data pipelines and track your changes in Git, check out the

Data Version Control (DVC) integration. The

spacy project dvc command generates a DVC config file

from a workflow defined in your project.yml so you can manage your spaCy

project as a DVC repo.

Project directory and assets

project.yml

The project.yml defines the assets a project depends on, like datasets and

pretrained weights, as well as a series of commands that can be run separately

or as a workflow – for instance, to preprocess the data, convert it to spaCy's

format, train a model, evaluate it and export metrics, package it and spin up a

quick web demo. It looks pretty similar to a config file used to define CI

pipelines.

https://github.com/explosion/spacy-boilerplates/blob/master/ner_fashion/project.yml

| Section | Description |
|---|---|
| variables | A dictionary of variables that can be referenced in paths, URLs and scripts. For example, {NAME} will use the value of the variable NAME. |
| assets | A list of assets that can be fetched with the project assets command. url defines a URL or local path, dest is the destination file relative to the project directory, and an optional checksum ensures that an error is raised if the file's checksum doesn't match. |
| workflows | A dictionary of workflow names, mapped to a list of command names, to execute in order. Workflows can be run with the project run command. |
| commands | A list of named commands. A command can define an optional help message (shown in the CLI when the user adds --help) and the script, a list of commands to run. The deps and outputs let you define the files the command depends on and produces, respectively. This lets spaCy determine whether a command needs to be re-run because its dependencies or outputs changed. Commands can be run as part of a workflow, or separately with the project run command. |
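To make the variables section more concrete, here's a small sketch of how `{NAME}` placeholders could be filled into script lines. It assumes the substitution behaves like Python's `str.format`, which may differ from what spaCy does internally. The `fill_variables` helper, the `export` command and the `scripts/export.py` script are all hypothetical.

```python
def fill_variables(project):
    """Return the project's commands with {NAME}-style placeholders filled in."""
    variables = project.get("variables", {})
    filled = []
    for command in project.get("commands", []):
        # str.format replaces {NAME} with variables["NAME"] and raises a
        # KeyError if a script references a variable that isn't defined.
        script = [step.format(**variables) for step in command.get("script", [])]
        filled.append({**command, "script": script})
    return filled


# Hypothetical project data, shaped like a parsed project.yml
project = {
    "variables": {"NAME": "ner_fashion"},
    "commands": [
        {"name": "export", "script": ["python scripts/export.py --name {NAME}"]}
    ],
}
print(fill_variables(project)[0]["script"])
```

One consequence of this approach is that literal curly braces in a script line would need to be escaped as `{{` and `}}`.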

Dependencies and outputs

Each command defined in the project.yml can optionally define a list of

dependencies and outputs. These are the files the command requires and creates.

For example, a command for training a model may depend on a

config.cfg and the training and evaluation data, and

it will export a directory model-best, containing the best model, which you

can then re-use in other commands.

### project.yml

commands:
  - name: train
    help: 'Train a spaCy model using the specified corpus and config'
    script:
      - 'python -m spacy train ./corpus/training.spacy ./corpus/evaluation.spacy ./configs/config.cfg -o training/'
    deps:
      - 'configs/config.cfg'
      - 'corpus/training.spacy'
      - 'corpus/evaluation.spacy'
    outputs:
      - 'training/model-best'

Re-running vs. skipping

Under the hood, spaCy uses a project.lock lockfile that stores the details for each command, as well as its dependencies and outputs and their checksums. It's updated on each run. If any of this information changes, the command will be re-run. Otherwise, it will be skipped.

If you're running a command and it depends on files that are missing, spaCy will

show you an error. If a command defines dependencies and outputs that haven't

changed since the last run, the command will be skipped. This means that you're

only re-running commands if they need to be re-run. To force re-running a

command or workflow, even if nothing changed, you can set the --force flag.
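The skip-or-re-run decision can be sketched as a comparison between a command's current state and the state recorded in the lockfile. This is an illustration under several assumptions, not spaCy's code: the helper names are hypothetical, the lockfile is stored as JSON to keep the example dependency-free, checksums are MD5 digests, and only files (not directories such as training/model-best) are hashed.

```python
import hashlib
import json
from pathlib import Path


def path_checksum(path):
    """MD5 hex digest of a file's bytes, or None if it isn't an existing file."""
    p = Path(path)
    return hashlib.md5(p.read_bytes()).hexdigest() if p.is_file() else None


def command_state(command):
    """Snapshot of everything that should trigger a re-run when it changes."""
    return {
        "script": command["script"],
        "deps": {d: path_checksum(d) for d in command.get("deps", [])},
        "outputs": {o: path_checksum(o) for o in command.get("outputs", [])},
    }


def read_lock(lock_path):
    """Load the lockfile contents, or an empty dict if it doesn't exist yet."""
    lock_file = Path(lock_path)
    return json.loads(lock_file.read_text()) if lock_file.exists() else {}


def needs_rerun(command, lock_path, force=False):
    """True if forced, never run before, or anything changed since the last run."""
    return force or read_lock(lock_path).get(command["name"]) != command_state(command)


def update_lock(command, lock_path):
    """Record the command's current state after a successful run."""
    lock = read_lock(lock_path)
    lock[command["name"]] = command_state(command)
    Path(lock_path).write_text(json.dumps(lock, indent=2))
```

The `force` argument mirrors the `--force` flag: it bypasses the comparison entirely.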

Note that spacy project doesn't compile any dependency

graphs based on the dependencies and outputs, and won't re-run previous steps

automatically. For instance, if you only run the command train that depends on

data created by preprocess and those files are missing, spaCy will show an

error – it won't just re-run preprocess. If you're looking for more advanced

data management, check out the Data Version Control (DVC) integration. If you're planning on integrating your spaCy project with DVC, you

can also use outputs_no_cache instead of outputs to define outputs that

won't be cached or tracked.

Files and directory structure

A project directory created by spacy project clone

includes the following files and directories. They can optionally be

pre-populated by a project template (most commonly used for metas, configs or

scripts).

### Project directory

├── project.yml # the project settings

├── project.lock # lockfile that tracks inputs/outputs

├── assets/ # downloaded data assets

├── metrics/ # output directory for evaluation metrics

├── training/ # output directory for trained models

├── corpus/ # output directory for training corpus

├── packages/ # output directory for model Python packages


├── notebooks/ # directory for Jupyter notebooks

├── scripts/ # directory for scripts, e.g. referenced in commands

├── metas/ # model meta.json templates used for packaging

├── configs/ # model config.cfg files used for training

└── ... # any other files, like a requirements.txt etc.

Custom scripts and projects

The project.yml lets you define any custom commands and run them as part of

your training, evaluation or deployment workflows. The script section defines

a list of commands that are called in a subprocess, in order. This lets you

execute other Python scripts or command-line tools. Let's say you've written a

few integration tests that load the best model produced by the training command

and check that it works correctly. You can now define a test command that

calls into pytest and runs your tests:

Calling into Python

If any of your command scripts call into python, spaCy will take care of replacing that with your sys.executable, to make sure you're executing everything with the same Python (not some other Python installed on your system). It also normalizes references to python3, pip3 and pip.
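As a rough illustration of that normalization, the sketch below rewrites the first token of a script line. The `normalize_command` helper is hypothetical, and mapping `pip` and `pip3` to `sys.executable -m pip` is an assumption about what "normalizes" means here, not a description of spaCy's exact behavior.

```python
import shlex
import sys


def normalize_command(command):
    """Split a script line and point python/pip at the current interpreter."""
    parts = shlex.split(command)
    if not parts:
        return parts
    if parts[0] in ("python", "python3"):
        # Use the interpreter that is running this process
        parts[0] = sys.executable
    elif parts[0] in ("pip", "pip3"):
        # Assumption: run pip as a module of the same interpreter
        parts = [sys.executable, "-m", "pip", *parts[1:]]
    return parts
```

The returned list can be passed straight to `subprocess.run`, so a step like `python -m pytest ./scripts/tests` runs in the same environment as spaCy itself.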

### project.yml

commands:
  - name: test
    help: 'Test the trained model'
    script:
      - 'python -m pytest ./scripts/tests'
    deps:
      - 'training/model-best'

Adding training/model-best to the command's deps lets you ensure that the

file is available. If not, spaCy will show an error and the command won't run.

Cloning from your own repo

The spacy project clone command lets you customize

the repo to clone from using the --repo option. It calls into git, so you'll

be able to clone from any repo that you have access to, including private repos.

$ python -m spacy project clone your_project --repo https://github.com/you/repo

At a minimum, a valid project template needs to contain a

project.yml. It can also include

other files, like custom scripts, a

requirements.txt listing additional dependencies,

training configs and model meta templates, or Jupyter

notebooks with usage examples.

It's typically not a good idea to check large data assets, trained models or other artifacts into a Git repo and you should exclude them from your project template. If you want to version your data and models, check out Data Version Control (DVC), which integrates with spaCy projects.

Working with private assets

For many projects, the datasets and weights you're working with might be

company-internal and not available via a public URL. In that case, you can

specify the destination paths and a checksum, and leave out the URL. When your

teammates clone and run your project, they can place the files in the respective

directory themselves. The spacy project assets

command will alert you about missing files and mismatched checksums, so you can

ensure that others are running your project with the same data.

### project.yml

assets:
  - dest: 'assets/private_training_data.json'
    checksum: '63373dd656daa1fd3043ce166a59474c'
  - dest: 'assets/private_vectors.bin'
    checksum: '5113dc04e03f079525edd8df3f4f39e3'
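If you'd like a quick overview of which private files your teammates still need to copy into place, a check along these lines could help. It's a sketch under assumptions rather than what spaCy runs: `audit_assets` is a hypothetical helper, `assets` is assumed to be the parsed assets list from the project.yml, and the checksums are assumed to be MD5 hex digests.

```python
import hashlib
from pathlib import Path


def audit_assets(assets, project_dir="."):
    """Map each asset's dest to 'ok', 'missing' or 'checksum mismatch'."""
    report = {}
    for asset in assets:
        dest = asset["dest"]
        path = Path(project_dir) / dest
        if not path.is_file():
            report[dest] = "missing"
            continue
        expected = asset.get("checksum")
        actual = hashlib.md5(path.read_bytes()).hexdigest()
        if expected is not None and actual != expected:
            report[dest] = "checksum mismatch"
        else:
            report[dest] = "ok"
    return report
```

Because the report lists every asset instead of stopping at the first problem, it's easy to see everything that still needs to be placed or replaced.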

Integrations

Data Version Control (DVC) {#dvc}

Data assets like training corpora or pretrained weights are at the core of any NLP project, but they're often difficult to manage: you can't just check them into your Git repo to version and keep track of them. And if you have multiple steps that depend on each other, like a preprocessing step that generates your training data, you need to make sure the data is always up-to-date, and re-run all steps of your process every time, just to be safe.

Data Version Control (DVC) is a standalone open-source tool that integrates into your workflow like Git, builds a dependency graph for your data pipelines and tracks and caches your data files. If you're downloading data from an external source, like a storage bucket, DVC can tell whether the resource has changed. It can also determine whether to re-run a step, depending on whether its inputs have changed or not. All metadata can be checked into a Git repo, so you'll always be able to reproduce your experiments.

To set up DVC, install the package and initialize your spaCy project as a Git and DVC repo. You can also customize your DVC installation to include support for remote storage like Google Cloud Storage, S3, Azure, SSH and more.

pip install dvc # Install DVC

git init # Initialize a Git repo

dvc init # Initialize a DVC project

The spacy project dvc command creates a dvc.yaml

config file based on a workflow defined in your project.yml. Whenever you

update your project, you can re-run the command to update your DVC config. You

can then manage your spaCy project like any other DVC project, run

dvc add to add and track assets

and dvc repro to reproduce the

workflow or individual commands.

$ python -m spacy project dvc [workflow name]

DVC currently expects a single workflow per project, so when creating the config

with spacy project dvc, you need to specify the name

of a workflow defined in your project.yml. You can still use multiple

workflows, but only one can be tracked by DVC.


Prodigy {#prodigy}

Prodigy is a modern annotation tool for creating training data for machine learning models, developed by us. It integrates with spaCy out-of-the-box and provides many different annotation recipes for a variety of NLP tasks, with and without a model in the loop. If Prodigy is installed in your project, you can run its recipes from the commands defined in your project.yml.

The following example command starts the Prodigy app using the

ner.correct recipe and streams in

suggestions for the given entity labels produced by a pretrained model. You can

then correct the suggestions manually in the UI. After you save and exit the

server, the full dataset is exported in spaCy's format and split into a training

and evaluation set.

### project.yml

variables:
  PRODIGY_DATASET: 'ner_articles'
  PRODIGY_LABELS: 'PERSON,ORG,PRODUCT'
  PRODIGY_MODEL: 'en_core_web_md'

commands:
  - name: annotate
    script:
      - 'python -m prodigy ner.correct {PRODIGY_DATASET} ./assets/raw_data.jsonl {PRODIGY_MODEL} --labels {PRODIGY_LABELS}'
      - 'python -m prodigy data-to-spacy ./corpus/train.spacy ./corpus/eval.spacy --ner {PRODIGY_DATASET}'
    deps:
      - 'assets/raw_data.jsonl'
    outputs:
      - 'corpus/train.spacy'
      - 'corpus/eval.spacy'


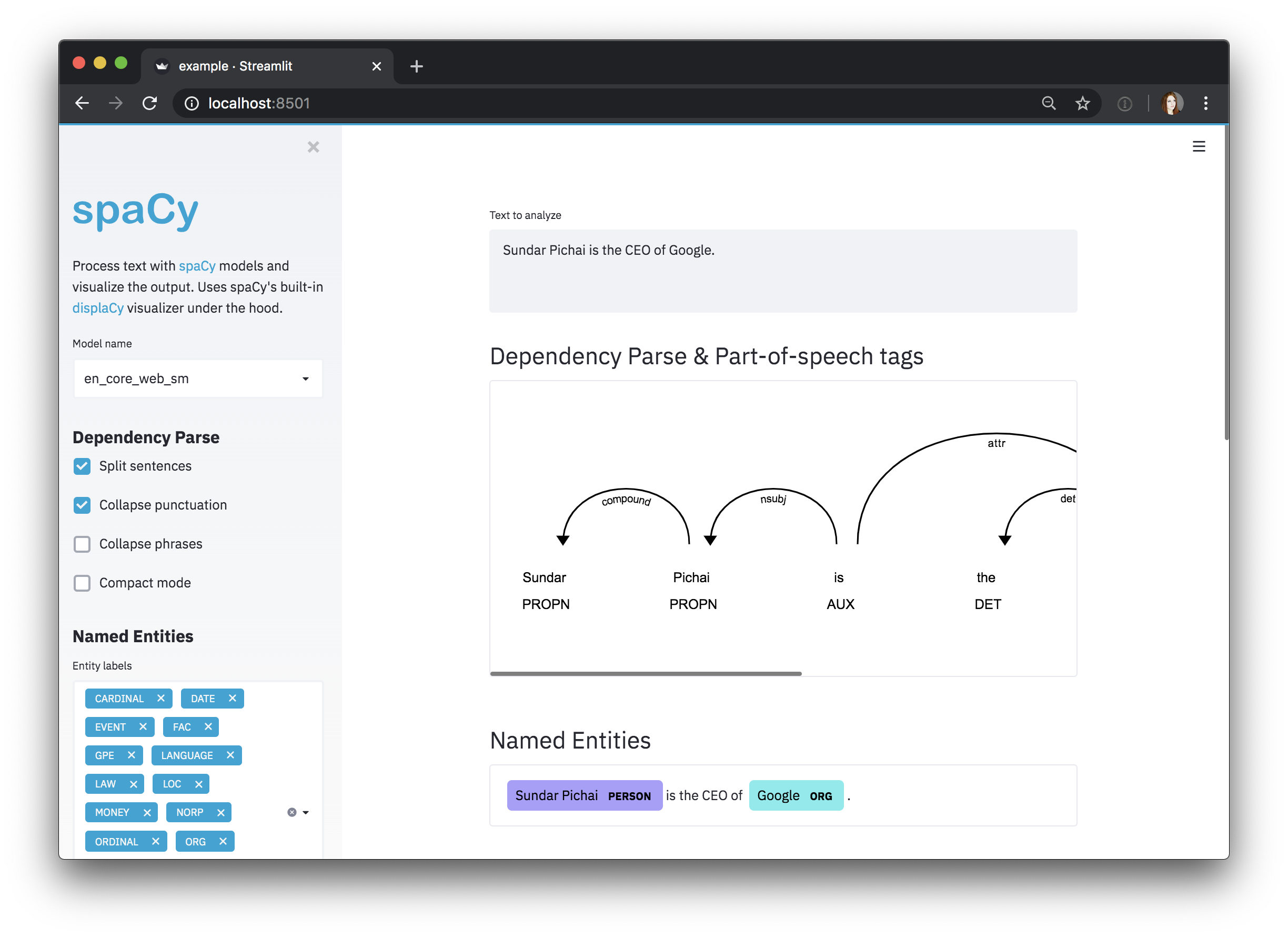

Streamlit {#streamlit}

Streamlit is a Python framework for building interactive

data apps. The spacy-streamlit

package helps you integrate spaCy visualizations into your Streamlit apps and

quickly spin up demos to explore your models interactively. It includes a full

embedded visualizer, as well as individual components.

$ pip install spacy-streamlit

Using spacy-streamlit, your

projects can easily define their own scripts that spin up an interactive

visualizer, using the latest model you trained, or a selection of models so you

can compare their results. The following script starts an NER visualizer and takes two positional command-line arguments you can pass in from your project.yml: a comma-separated list of model paths and an example text to use as the default text.

### scripts/visualize.py

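import spacy_streamlit
import sys

# Second argument (optional): the default text shown in the visualizer
DEFAULT_TEXT = sys.argv[2] if len(sys.argv) >= 3 else ""
# First argument: comma-separated list of model names or paths
MODELS = [name.strip() for name in sys.argv[1].split(",")]
# Start the app with only the NER visualizer enabled
spacy_streamlit.visualize(MODELS, DEFAULT_TEXT, visualizers=["ner"])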

### project.yml

commands:
  - name: visualize
    help: "Visualize the model's output interactively using Streamlit"
    script:
      - 'streamlit run ./scripts/visualize.py ./training/model-best "I like Adidas shoes."'
    deps:
      - 'training/model-best'


FastAPI {#fastapi}
